2026 is the year when Data Mesh transitions from buzzword to reality. Companies are saying goodbye to monolithic data warehouses and embracing decentralized data products, real-time event streaming, and AI-powered analytics. The transformation has begun.

The Data Mesh Revolution: Why Centralized Architectures Fail

For years, companies tried to consolidate all data into a central data warehouse. The result: overloaded data teams, months-long waiting times for new reports, and data silos that nobody can navigate anymore.

Data Mesh reverses this architecture. Instead of centralization, it relies on four fundamental principles:

- Domain Ownership: Each business domain is responsible for its own data

- Data as a Product: Data is treated like products – with SLAs, documentation, and quality guarantees

- Self-Serve Platform: A central platform enables decentralized autonomy

- Federated Governance: Global standards, local implementation

"Data Mesh is not just a technical architecture – it's an organizational paradigm that changes how we think about data responsibility."

— Zhamak Dehghani, Creator of Data Mesh

Event Streaming with Apache Kafka: The Nervous System of Modern Data Architectures

Apache Kafka has established itself as the indispensable backbone for real-time analytics in 2026. With Kafka 4.0 and the complete replacement of ZooKeeper by KRaft, the platform is more mature than ever.

The Kafka Architecture 2026

# kafka-cluster-config.yaml
# Note: Strimzi releases that support Kafka 4.0 run in KRaft mode and may expect
# replicas and storage in a separate KafkaNodePool resource; check your operator version.
apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: enterprise-data-mesh
spec:
  kafka:
    version: 4.0.0
    replicas: 5
    listeners:
      - name: internal
        port: 9092
        type: internal
        tls: true
      - name: external
        port: 9094
        type: loadbalancer
        tls: true
    config:
      auto.create.topics.enable: false
      compression.type: zstd
      num.partitions: 12
      default.replication.factor: 3
      min.insync.replicas: 2
    storage:
      type: persistent-claim
      size: 2Ti
      class: premium-ssd
  kafkaExporter:
    groupRegex: ".*"
    topicRegex: ".*"

Event-Driven Microservices Pattern

The classic request-response approach gives way to the event-driven pattern:

// order-service/src/events/order-placed.ts
import { randomUUID } from 'node:crypto'
import { Kafka, Partitioners } from 'kafkajs'

// Fail fast when a required environment variable is missing.
function requireEnv(name: string): string {
  const value = process.env[name]
  if (!value) throw new Error(`Missing environment variable: ${name}`)
  return value
}

const kafka = new Kafka({
  clientId: 'order-service',
  brokers: requireEnv('KAFKA_BROKERS').split(','),
  ssl: true,
  sasl: {
    mechanism: 'scram-sha-512',
    username: requireEnv('KAFKA_USER'),
    password: requireEnv('KAFKA_PASSWORD'),
  },
})

const producer = kafka.producer({
  createPartitioner: Partitioners.DefaultPartitioner,
  idempotent: true,
  transactionalId: 'order-transactions',
})

// Connect once at module load; publishOrderPlaced awaits this before sending.
const producerReady = producer.connect()

interface OrderPlacedEvent {
  eventId: string
  eventType: 'ORDER_PLACED'
  timestamp: string
  payload: {
    orderId: string
    customerId: string
    items: Array<{ productId: string; quantity: number; price: number }>
    totalAmount: number
    currency: string
  }
  metadata: {
    correlationId: string
    source: string
    version: string
  }
}

// Assumed shape of the domain object; the real Order type is defined elsewhere in the service.
interface Order {
  id: string
  customerId: string
  correlationId: string
  items: OrderPlacedEvent['payload']['items']
  total: number
}

export async function publishOrderPlaced(order: Order): Promise<void> {
  const event: OrderPlacedEvent = {
    eventId: randomUUID(),
    eventType: 'ORDER_PLACED',
    timestamp: new Date().toISOString(),
    payload: {
      orderId: order.id,
      customerId: order.customerId,
      items: order.items,
      totalAmount: order.total,
      currency: 'CHF',
    },
    metadata: {
      correlationId: order.correlationId,
      source: 'order-service',
      version: '2.0.0',
    },
  }

  await producerReady
  await producer.send({
    topic: 'commerce.orders.placed',
    messages: [
      {
        // Keying by customer keeps one customer's orders in a single partition, in order.
        key: order.customerId,
        value: JSON.stringify(event),
        headers: {
          'event-type': 'ORDER_PLACED',
          'content-type': 'application/json',
        },
      },
    ],
  })
}

Decentralized Data Products: The Heart of Data Mesh

A data product is more than just a table or dataset. It's a standalone, autonomous artifact with clear interfaces:

| Component | Description | Example |

|---|---|---|

| Input Ports | Data sources and events | Kafka Topics, APIs, Databases |

| Transformation | Business Logic | dbt Models, Spark Jobs |

| Output Ports | Consumable interfaces | REST APIs, SQL Views, Parquet Files |

| Data Contract | Schema and SLAs | JSON Schema, OpenAPI, Data Quality Rules |

| Observability | Monitoring and lineage | Metrics, Logs, Data Lineage Graph |
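The Data Contract row only pays off once something actually checks records against it at the output port. The following is a minimal sketch of such a check, not a reference implementation: the record shape, the `updatedAt` field, the list of segments, and the 15-minute freshness limit are all illustrative assumptions, and `checkContract` is a hypothetical helper.

```typescript
// Hypothetical record shape for a customer-360 style data product.
interface CustomerRecord {
  customerId: string
  segment: string
  lifetimeValue: number
  updatedAt: string // ISO 8601 timestamp of the last refresh (assumed field)
}

const ALLOWED_SEGMENTS = ['premium', 'standard', 'new', 'churning']
const MAX_STALENESS_MS = 15 * 60 * 1000 // assumed freshness SLA: 15 minutes

// Returns a list of human-readable violations; an empty list means the record passes.
function checkContract(record: CustomerRecord, now: Date): string[] {
  const violations: string[] = []

  if (ALLOWED_SEGMENTS.indexOf(record.segment) === -1) {
    violations.push(`segment "${record.segment}" is not an allowed value`)
  }

  if (!isFinite(record.lifetimeValue) || record.lifetimeValue < 0) {
    violations.push('lifetimeValue must be a non-negative number')
  }

  const updated = Date.parse(record.updatedAt)
  if (isNaN(updated)) {
    violations.push('updatedAt is not a valid timestamp')
  } else if (now.getTime() - updated > MAX_STALENESS_MS) {
    violations.push('record is older than the freshness SLA')
  }

  return violations
}
```

Returning a list of violations rather than a boolean lets the owning domain team report every broken rule at once, which tends to be more useful in quality dashboards than a single pass/fail flag.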

Data Contract Example

# data-products/customer-360/contract.yaml
dataProduct:
  name: customer-360
  domain: marketing
  owner: marketing-data-team
  version: 3.2.0
  description: |
    Unified customer view combining CRM, transactions,
    and behavioral data for personalization use cases.

  schema:
    type: object
    properties:
      customerId:
        type: string
        format: uuid
        description: Unique customer identifier
      segment:
        type: string
        enum: [premium, standard, new, churning]
      lifetimeValue:
        type: number
        minimum: 0
        description: Predicted customer lifetime value in CHF
      lastActivity:
        type: string
        format: date-time
      preferences:
        type: object
        properties:
          language: { type: string }
          channels: { type: array, items: { type: string } }

  sla:
    freshness: PT15M  # Max 15 minutes delay
    availability: 99.9%
    qualityScore: 0.95

  access:
    classification: internal
    requiredPermissions:
      - marketing:read
      - analytics:read

Snowflake & dbt: The Modern Data Stack 2026

The combination of Snowflake as the cloud data platform and dbt as the transformation layer dominates the enterprise market in 2026. What is new is the native integration of AI features directly into the warehouse.

dbt with Snowflake Cortex

-- models/marts/customer_insights.sql
{{ config(
    materialized='incremental',
    unique_key='customer_id',
    cluster_by=['segment'],
    tags=['customer', 'ml']
) }}

WITH customer_base AS (
    SELECT
        customer_id,
        email,
        created_at,
        updated_at,  -- needed by the incremental filter at the bottom
        total_orders,
        total_revenue,
        last_order_date
    FROM {{ ref('stg_customers') }}
),

-- Snowflake Cortex AI: Sentiment Analysis
support_sentiment AS (
    SELECT
        customer_id,
        AVG(
            SNOWFLAKE.CORTEX.SENTIMENT(ticket_text)
        ) AS avg_sentiment_score
    FROM {{ ref('stg_support_tickets') }}
    WHERE created_at >= DATEADD(month, -3, CURRENT_DATE)
    GROUP BY customer_id
),

-- Snowflake Cortex AI: Churn Prediction
-- customer_features (with its customer_data column) is assumed to exist upstream;
-- it is not defined in this excerpt. Model availability in Cortex varies by
-- region and account, so check the model name before running this.
churn_prediction AS (
    SELECT
        customer_id,
        SNOWFLAKE.CORTEX.COMPLETE(
            'claude-3-opus',
            CONCAT(
                'Based on this customer data, predict churn risk (low/medium/high): ',
                TO_VARCHAR(customer_data)
            )
        ) AS churn_risk
    FROM customer_features
),

-- Rule-based segmentation
-- customer_metrics (order_frequency, days_since_last_order) is likewise assumed
-- to exist upstream and is not defined in this excerpt.
segmentation AS (
    SELECT
        customer_id,
        CASE
            WHEN total_revenue > 10000 AND order_frequency > 12 THEN 'premium'
            WHEN days_since_last_order > 180 THEN 'churning'
            WHEN created_at > DATEADD(month, -6, CURRENT_DATE) THEN 'new'
            ELSE 'standard'
        END AS segment
    FROM customer_metrics
)

SELECT
    c.customer_id,
    c.email,
    s.segment,
    c.total_revenue AS lifetime_value,
    c.total_orders,
    COALESCE(ss.avg_sentiment_score, 0) AS support_sentiment,
    cp.churn_risk,
    CURRENT_TIMESTAMP AS updated_at
FROM customer_base c
LEFT JOIN segmentation s ON c.customer_id = s.customer_id
LEFT JOIN support_sentiment ss ON c.customer_id = ss.customer_id
LEFT JOIN churn_prediction cp ON c.customer_id = cp.customer_id

{% if is_incremental() %}
WHERE c.updated_at > (SELECT MAX(updated_at) FROM {{ this }})
{% endif %}

dbt Tests and Data Quality

# models/marts/schema.yml
version: 2

models:
  - name: customer_insights
    description: "Customer 360 view with AI-powered insights"
    config:
      contract:
        enforced: true
    # With an enforced contract, every column the model returns must be listed
    # with a data_type; the remaining columns are omitted here for brevity.
    columns:
      - name: customer_id
        data_type: varchar
        constraints:
          - type: not_null
          - type: primary_key
        tests:
          - unique
          - not_null
      - name: segment
        data_type: varchar
        tests:
          - accepted_values:
              values: ['premium', 'standard', 'new', 'churning']
      - name: lifetime_value
        data_type: number
        tests:
          - not_null
          - dbt_utils.accepted_range:
              min_value: 0
              inclusive: true
    tests:
      - dbt_utils.recency:
          datepart: hour
          field: updated_at
          interval: 1

Apache Spark 4.0: Streaming and Batch United

With Spark 4.0, the boundary between batch and stream processing finally disappears. Spark Connect also enables complete decoupling of client and server.
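The streaming job in this section aggregates over five-minute windows that slide every minute, so a single order is counted in several overlapping windows. As a rough illustration of that assignment in plain TypeScript (this is not Spark's implementation; `windowsFor` and `TimeWindow` are hypothetical names, and windows are assumed to be aligned to the epoch with an inclusive start and exclusive end):

```typescript
interface TimeWindow {
  start: number // epoch milliseconds, inclusive
  end: number   // epoch milliseconds, exclusive
}

// Lists every sliding window [start, start + sizeMs) that contains the event time.
// Window starts are aligned to multiples of slideMs since the epoch.
function windowsFor(eventMs: number, sizeMs: number, slideMs: number): TimeWindow[] {
  const windows: TimeWindow[] = []

  // Latest aligned window start at or before the event.
  const lastStart = Math.floor(eventMs / slideMs) * slideMs

  // Walk backwards while the window still covers the event.
  for (let start = lastStart; start > eventMs - sizeMs; start -= slideMs) {
    windows.push({ start: start, end: start + sizeMs })
  }

  // Return the windows in chronological order.
  return windows.reverse()
}
```

An order placed at 12:03:30 falls into the five windows starting at 11:59, 12:00, 12:01, 12:02, and 12:03, which is why the same revenue contributes to five output rows per region.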

# analytics/realtime_aggregations.py
from pyspark.sql import SparkSession
from pyspark.sql.functions import (
    window, col, sum, count, avg,
    from_json, to_json, struct, to_timestamp
)
from pyspark.sql.types import StructType, StructField, StringType, DoubleType

# Spark Connect: Remote Session
spark = SparkSession.builder \
    .remote("sc://spark-cluster.internal:15002") \
    .appName("RealTimeAnalytics") \
    .config("spark.sql.streaming.stateStore.providerClass",
            "org.apache.spark.sql.execution.streaming.state.RocksDBStateStoreProvider") \
    .getOrCreate()

# Schema for incoming events
# This assumes a flattened order event. The envelope published by order-service
# nests these fields under "payload" and would need to be unpacked first.
order_schema = StructType([
    StructField("orderId", StringType()),
    StructField("customerId", StringType()),
    StructField("amount", DoubleType()),
    StructField("currency", StringType()),
    StructField("timestamp", StringType()),
    StructField("region", StringType()),
])

# Kafka Source - Real-Time Stream
# The event time arrives as an ISO 8601 string and is cast to a timestamp,
# which withWatermark and window require.
orders_stream = spark \
    .readStream \
    .format("kafka") \
    .option("kafka.bootstrap.servers", "kafka.internal:9092") \
    .option("subscribe", "commerce.orders.placed") \
    .option("startingOffsets", "latest") \
    .option("kafka.security.protocol", "SASL_SSL") \
    .load() \
    .select(from_json(col("value").cast("string"), order_schema).alias("order")) \
    .select("order.*") \
    .withColumn("timestamp", to_timestamp(col("timestamp")))

# Windowed Aggregations
revenue_per_region = orders_stream \
    .withWatermark("timestamp", "10 minutes") \
    .groupBy(
        window(col("timestamp"), "5 minutes", "1 minute"),
        col("region")
    ) \
    .agg(
        sum("amount").alias("total_revenue"),
        count("orderId").alias("order_count"),
        avg("amount").alias("avg_order_value")
    )

# Output to Delta Lake and Kafka
# Delta's streaming sink accepts append or complete mode; with a watermark,
# append writes each window once it is final.
query = revenue_per_region \
    .writeStream \
    .format("delta") \
    .outputMode("append") \
    .option("checkpointLocation", "/data/checkpoints/revenue") \
    .partitionBy("region") \
    .toTable("analytics.realtime_revenue")

# Parallel: Alerts to Kafka
alerts_query = revenue_per_region \
    .filter(col("order_count") > 1000) \
    .select(to_json(struct("*")).alias("value")) \
    .writeStream \
    .format("kafka") \
    .option("kafka.bootstrap.servers", "kafka.internal:9092") \
    .option("topic", "analytics.alerts.high-volume") \
    .option("checkpointLocation", "/data/checkpoints/alerts") \
    .start()

query.awaitTermination()

AI-Powered Insights: From Data to Decisions

In 2026, analytics without AI is almost unimaginable. The integration of Large Language Models into analytics workflows enables entirely new use cases:

- Natural Language Queries: "Show me the top customers in Zurich with declining revenues"

- Automated Insights: AI detects anomalies and generates explanations

- Predictive Analytics: Churn prediction, demand forecasting, fraud detection

- Data Quality Automation: Automatic detection and correction of data errors

Text-to-SQL with Claude
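The listing in this section calls an `isSafeQuery` guard without showing it. As a rough sketch of what such a guard could check (an assumption, not the implementation behind this article): the text must be a single statement, must start with SELECT or WITH, and must not contain write or DDL keywords. The keyword list is illustrative and deliberately conservative, so it will reject some harmless queries, for example one that filters on the string 'delete'.

```typescript
// Keywords that should never appear in a read-only analytics query (illustrative list).
const FORBIDDEN_KEYWORDS =
  /\b(insert|update|delete|merge|drop|alter|create|truncate|grant|revoke|call|copy)\b/i

function isSafeQuery(sql: string): boolean {
  // Remove comments so keywords and semicolons cannot hide inside them.
  const stripped = sql
    .replace(/--.*$/gm, ' ')            // line comments
    .replace(/\/\*[\s\S]*?\*\//g, ' ')  // block comments
    .trim()
    .replace(/;\s*$/, '')               // tolerate one trailing semicolon

  if (stripped.length === 0) return false

  // Any remaining semicolon means more than one statement.
  if (stripped.indexOf(';') !== -1) return false

  // Only plain queries and CTE-based queries are accepted.
  if (!/^(select|with)\b/i.test(stripped)) return false

  return !FORBIDDEN_KEYWORDS.test(stripped)
}
```

A keyword filter like this is only a first line of defense. Running generated SQL under a read-only database role with access limited to the requested tables is the stronger control, since it still holds when the filter misses something.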

// analytics-api/src/natural-language-query.ts

import Anthropic from '@anthropic-ai/sdk'

import { db } from './database'

const anthropic = new Anthropic()

interface QueryResult {

sql: string

explanation: string

data: Record<string, unknown>[]

visualization: 'table' | 'bar' | 'line' | 'pie'

}

export async function executeNaturalLanguageQuery(

question: string,

context: { tables: string[]; userRole: string }

): Promise<QueryResult> {

// Load schema information

const schemaInfo = await getSchemaForTables(context.tables)

const response = await anthropic.messages.create({

model: 'claude-sonnet-4-20250514',

max_tokens: 2000,

system: `You are a SQL expert for Snowflake.

Generate only safe SELECT statements.

Available tables and schemas:

${schemaInfo}

Always respond in JSON format:

{

"sql": "SELECT ...",

"explanation": "This query...",

"visualization": "table|bar|line|pie"

}`,

messages: [

{

role: 'user',

content: question,

},

],

})

const result = JSON.parse(response.content[0].text)

// SQL Injection Prevention

if (!isSafeQuery(result.sql)) {

throw new Error('Unsafe query detected')

}

// Execute query

const data = await db.execute(result.sql)

return {

sql: result.sql,

explanation: result.explanation,

data,

visualization: result.visualization,

}

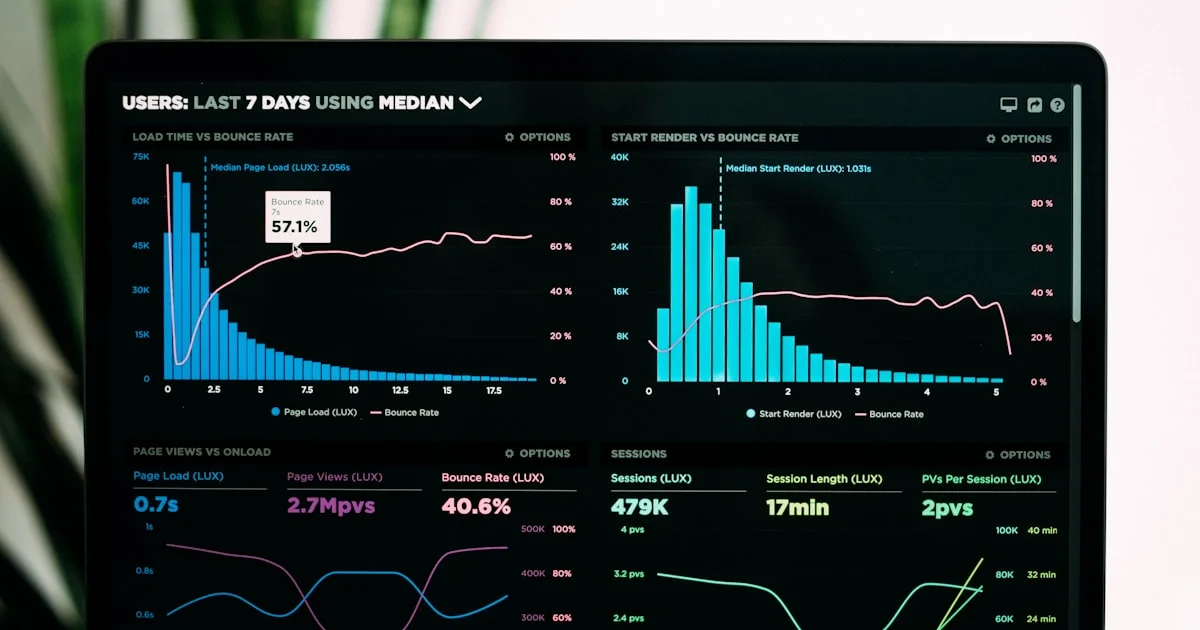

}BI Dashboards & Visualization: The Last Mile

The best data architecture is useless if insights don't reach decision-makers. In 2026, leading tools focus on Embedded Analytics and Self-Service BI.

Tool Comparison 2026

| Tool | Strengths | Ideal for | Price (CHF/User/Month) |

|---|---|---|---|

| Tableau | Visualization, Enterprise Features | Large teams, complex dashboards | 70-150 |

| Looker | Semantic Layer, Git Integration | Data Teams, Embedding | 60-120 |

| Metabase | Open Source, Self-Service | Startups, Self-Hosting | 0-85 |

| Superset | Open Source, SQL-First | Technical Teams | 0 (Self-Hosted) |

| Sigma | Spreadsheet UI, Cloud-Native | Business Users | 50-100 |

Embedded Analytics Example

// components/EmbeddedDashboard.tsx
'use client'

import { useEffect, useState } from 'react'
import { LookerEmbedSDK } from '@looker/embed-sdk'

interface DashboardProps {
  dashboardId: string
  filters?: Record<string, string>
}

export function EmbeddedDashboard({ dashboardId, filters }: DashboardProps) {
  const [dashboard, setDashboard] = useState<LookerDashboard | null>(null)

  useEffect(() => {
    LookerEmbedSDK.init('https://analytics.mazdek.ch', {
      auth_url: '/api/looker/auth',
    })

    LookerEmbedSDK.createDashboardWithId(dashboardId)
      .withFilters(filters || {})
      .withTheme('mazdek_dark')
      .appendTo('#dashboard-container')
      .build()
      .connect()
      .then(setDashboard)
  }, [dashboardId, filters])

  const handleExport = async () => {
    if (dashboard) {
      await dashboard.downloadPdf()
    }
  }

  return (
    <div className="dashboard-wrapper">
      <div className="dashboard-toolbar">
        <button onClick={handleExport}>PDF Export</button>
        <button onClick={() => dashboard?.refresh()}>Refresh</button>
      </div>
      <div id="dashboard-container" className="h-[600px]" />
    </div>
  )
}

Implementation Roadmap: From 0 to Data Mesh

A Data Mesh transformation doesn't happen overnight. Here's a realistic roadmap:

Phase 1: Foundation (3-6 Months)

- Build Self-Serve Data Platform (Snowflake, dbt Cloud)

- Set up Kafka Cluster for Event Streaming

- Define Data Governance Framework

- First pilot data product with one domain team

Phase 2: Scale (6-12 Months)

- Onboard 3-5 additional data products

- Establish Data Contracts as standard

- Implement BI tool strategy

- Implement Data Quality Monitoring

Phase 3: Optimize (12-18 Months)

- AI-powered analytics features

- Expand real-time use cases

- Refine Federated Governance

- Cost Optimization and FinOps

Conclusion: Data as Strategic Competitive Advantage

Data Mesh, Real-Time Analytics, and AI-powered Insights are no longer nice-to-haves in 2026; they are business-critical capabilities. Companies that invest now secure decisive competitive advantages:

- Faster Decisions: From days to seconds

- Better Data Quality: Through decentralized responsibility

- Scalable Architecture: Growth without bottlenecks

- AI-Readiness: Solid data foundation for ML and GenAI

At mazdek, we guide companies on their journey to becoming data-driven organizations – from strategy through architecture to implementation.